eeClust

Goals

The goal of the eeClust project (Energy-Efficient Cluster Computing) is to determine relationships between the behaviour of parallel programs and the energy consumption of their execution on a compute cluster.

Based on this, strategies to reduce the energy consumption without impairing program performance will be developed. Project partners are the University of Hamburg (coordinator), Dresden University of Technology (TUD/ZIH), ParTec Cluster Competence Center GmbH, and the Jülich Supercomputing Centre of Forschungszentrum Jülich GmbH.

In principle, this goal can be achieved by switching as many hardware components as possible into their energy-saving modes during the periods in which they are not used. Modern hardware and operating systems already apply these mechanisms, but they rely on simple heuristics without knowledge of the execution behaviour of the applications currently running. This leaves considerable room for wrong decisions.

The project will develop enhanced analysis software for parallel programs based on the successful Vampir (Dresden) and Scalasca (Jülich) tools, which will be extended to record energy-related metrics in addition to measuring and analyzing program behaviour.

Based on this new energy efficiency analysis, the users can then insert energy control calls into their applications which will allow the operating system and the cluster job scheduler to control the cluster hardware in an energy-efficient way. The necessary software components will be developed by Hamburg and ParTec.

The effectiveness of the proposed strategy will be evaluated on a small cluster testbed equipped with special energy measurement and control components, using synthetic and realistic benchmarks that also need to be developed in the course of the project.

Contact: Dr. Timo Minartz

Project Terms

- Funded by the German Ministry of Education and Research (BMBF) under grant 01IH08008E

- Call “HPC-Software für skalierbare Parallelrechner”

- April 2009 – March 2012

Project Details

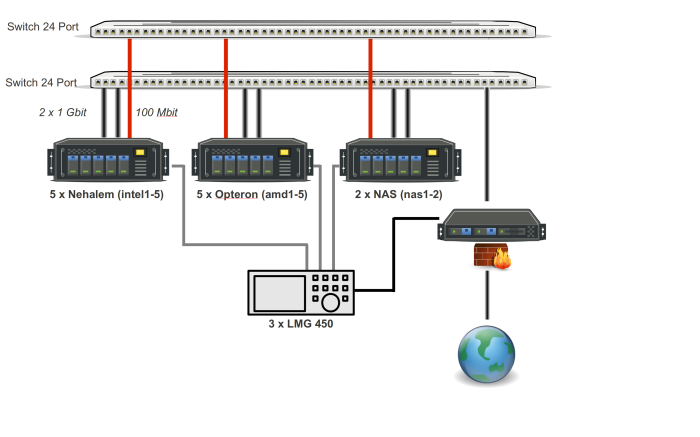

Hardware

The initial step is to build a power-aware cluster whose hardware supports multiple operating and idle states. Our cluster consists of five dual-socket Intel Nehalem (Xeon X5560, 4 cores + SMT) and five dual-socket AMD Magny-Cours (Opteron 6168, 12 cores) compute nodes.

To measure the power consumption of the hardware, each node and the Gigabit switch are connected to ZES LMG450 high-precision power meters with an accuracy of 0.1%. The power consumption of each node is stored in a database on the head node, to which all power meters are connected via serial ports. The cluster nodes run openSUSE as the operating system, ParaStation as the cluster management system, and VampirTrace, Vampir and Scalasca as tracing and trace analysis tools.
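As an illustration of how the stored power readings relate to energy, the following minimal sketch integrates a series of power samples over time with the trapezoidal rule. The function name and the plain-list input format are assumptions made for this example; the actual database schema and sampling interval are not described here.

```python
from typing import Sequence


def energy_joules(timestamps_s: Sequence[float], power_w: Sequence[float]) -> float:
    """Integrate power samples (watts) over time (seconds) to get energy (joules).

    Hypothetical helper: assumes samples are sorted by time and that power
    varies linearly between consecutive samples (trapezoidal rule).
    """
    if len(timestamps_s) != len(power_w):
        raise ValueError("timestamps and power samples must have the same length")
    total = 0.0
    for i in range(1, len(timestamps_s)):
        dt = timestamps_s[i] - timestamps_s[i - 1]
        if dt < 0:
            raise ValueError("timestamps must be sorted in ascending order")
        # Area of the trapezoid between two consecutive samples.
        total += 0.5 * (power_w[i] + power_w[i - 1]) * dt
    return total
```

For example, a node drawing a constant 100 W for two seconds consumes 200 J, so `energy_joules([0, 1, 2], [100, 100, 100])` yields `200.0`.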

Trace Generation

The first task is to extend VampirTrace to integrate the energy characteristics from the database; this is done offline after the application has finished. The resulting OTF file contains both the performance characteristics of the parallel program and the corresponding power consumption, which can be analyzed with Vampir and Scalasca.
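The idea behind the offline merge can be sketched as follows: once the run is over, power samples are matched to traced code regions by timestamp. This is only a conceptual sketch under simplifying assumptions; it does not read or write OTF files, and the tuple layouts and the function name are hypothetical rather than part of VampirTrace.

```python
from statistics import mean
from typing import Dict, List, Optional, Tuple

Region = Tuple[str, float, float]   # (region name, start time in s, end time in s)
Sample = Tuple[float, float]        # (timestamp in s, power in W)


def average_power_per_region(
    regions: List[Region], samples: List[Sample]
) -> Dict[str, Optional[float]]:
    """Assign each power sample to the region whose interval contains it.

    Assumes both sources share one clock and that region names are unique.
    Regions that contain no sample are reported as None.
    """
    result: Dict[str, Optional[float]] = {}
    for name, start, end in regions:
        # Half-open interval so a sample on a boundary belongs to one region only.
        inside = [watts for t, watts in samples if start <= t < end]
        result[name] = mean(inside) if inside else None
    return result
```

In practice the clocks of the power meters and the compute nodes would have to be synchronized before such a merge is meaningful; the sketch simply assumes they already are.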

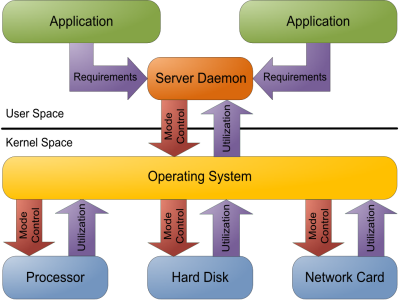

Managing Hardware Power Saving Modes

To optimize the power consumption of an application, code regions can be executed in different hardware device states. For this purpose, a server daemon manages the requirements of the different instrumented applications and decides on the power-saving modes of the hardware components.
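One way such a daemon could reconcile competing requirements is sketched below. The policy shown (the most demanding request for a device wins, and a device without requests drops to its lowest state) is an assumption for illustration, as are the class names and the three-level state model; the project's actual daemon interface and decision logic are not described here.

```python
from collections import defaultdict
from enum import IntEnum
from typing import DefaultDict, Dict


class DeviceState(IntEnum):
    """Hypothetical device states, ordered from lowest to highest power."""
    SLEEP = 0
    LOW = 1
    FULL = 2


class PowerArbiter:
    """Collects per-application requirements and picks one state per device."""

    def __init__(self) -> None:
        # device name -> {application name -> requested state}
        self._requests: DefaultDict[str, Dict[str, DeviceState]] = defaultdict(dict)

    def request(self, app: str, device: str, state: DeviceState) -> None:
        """Record (or update) the state an application needs for a device."""
        self._requests[device][app] = state

    def release(self, app: str) -> None:
        """Drop all requirements of an application, e.g. when it exits."""
        for per_app in self._requests.values():
            per_app.pop(app, None)

    def decide(self, device: str) -> DeviceState:
        """Assumed policy: honour the most demanding request, else sleep."""
        per_app = self._requests.get(device, {})
        return max(per_app.values(), default=DeviceState.SLEEP)


arbiter = PowerArbiter()
arbiter.request("app_a", "disk", DeviceState.LOW)
arbiter.request("app_b", "disk", DeviceState.FULL)
print(arbiter.decide("disk").name)  # the more demanding request wins
```

Taking the maximum is a conservative choice: no application runs on a device in a lower state than it asked for, at the cost of saving less energy when requirements differ.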

Finally, the hardware device states and the energy characteristics can be visualized using the GridMonitor.

Evaluation

One of the project goals is to design and implement an energy efficiency benchmark that is able to characterize the power consumption of clusters. This benchmark should be able to selectively stress individual components and thereby investigate the system's ability to effectively limit the power consumption of underutilized units.